The Algorithm Isn’t Neutral: How Social Media Amplifies or Silences Latina Creators—and What to Do About It

There’s an experience many Latina content creators in the U.S. know firsthand, even if they rarely name it this way. You post content you know is good. It’s relevant. It connects with your audience. And yet, the reach is minimal, the views don’t come, the content simply doesn’t circulate.

Meanwhile, you see lower-quality content—with less care, less depth, less real information—blow up in reach and engagement. And you wonder what’s happening. If you did something wrong. If the algorithm has something against you.

The uncomfortable truth is: the algorithm doesn’t have a personal issue with you. But it does have structural biases. And those biases disproportionately affect Latina creators, Black creators, those who create in languages other than English, and those building communities around identities that platforms didn’t prioritize in their original design.

This isn’t paranoia. It’s a pattern backed by years of research, leaked internal reports, and documented testimonies from creators who have experienced exactly this.

What an algorithm is—and why it’s not neutral

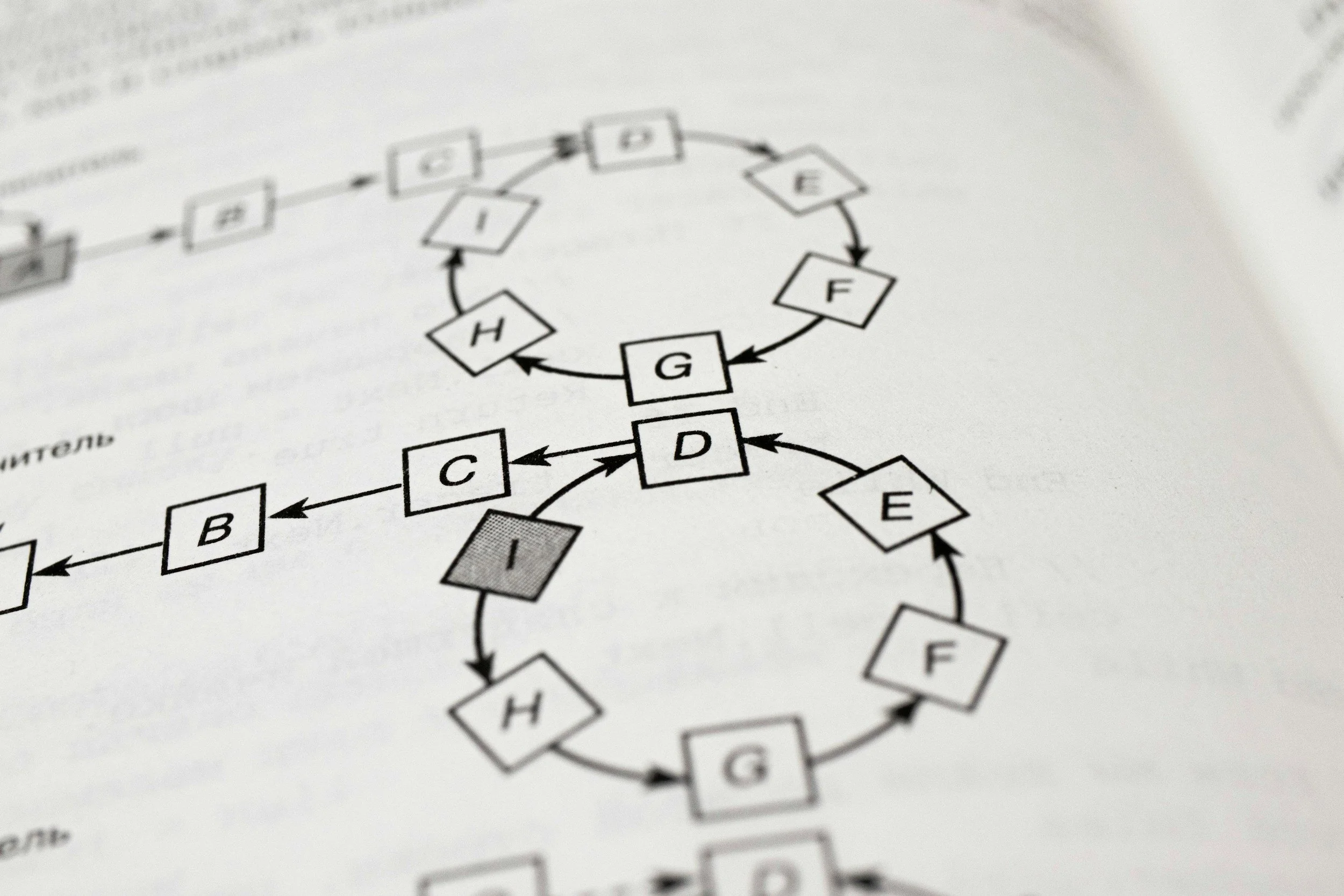

An algorithm is simply a set of instructions a computer follows to make decisions. In social media, those instructions determine what content is shown, to whom, in what order, how often, and how widely it’s amplified.

The algorithms behind Instagram, TikTok, Facebook, and YouTube weren’t explicitly designed to discriminate. They were designed to maximize how long users stay on the platform and how many ads they see.

But the data they were trained on—the historical behavior of users—already reflected real-world patterns, including bias.

As a result, algorithms learned to amplify content that historically generated the most engagement. And that content was already skewed toward certain creators, aesthetics, and languages—primarily English.

So when a Latina creator posts in Spanish, or mixes Spanish and English, or speaks to a niche cultural audience, she’s operating at a structural disadvantage in a system that wasn’t built with her in mind.
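To make that feedback loop concrete, here is a minimal Python sketch of a toy feed. It assumes a ranking rule that looks only at accumulated engagement. The function `simulate_feed`, the post names, and every number are hypothetical. This is not how Instagram, TikTok, or any real platform ranks content. It only shows how ranking on past engagement can lock in an early head start.

```python
# Hypothetical toy model: a feed that ranks posts only by accumulated engagement.
# It is NOT any real platform's algorithm; every number here is an assumption.

def simulate_feed(history, appeal, rounds=5, slots=1000, top_slots=800):
    """Return accumulated engagement per post after `rounds` of ranking.

    history: starting engagement per post (the "historical data").
    appeal:  engagements earned per 1,000 impressions (the content's real pull).
    Each round the top-ranked post gets `top_slots` impressions and the
    remaining posts split what is left evenly.
    """
    engagement = dict(history)
    for _ in range(rounds):
        # Rank purely on past engagement: this is where the loop feeds itself.
        ranked = sorted(engagement, key=engagement.get, reverse=True)
        others = len(ranked) - 1
        share = (slots - top_slots) // others if others else 0
        for position, post in enumerate(ranked):
            impressions = top_slots if position == 0 else share
            # Integer arithmetic keeps the toy output exact.
            engagement[post] += impressions * appeal[post] // 1000
    return engagement


if __name__ == "__main__":
    start = {"english_post": 60, "spanish_post": 50}
    pull = {"english_post": 100, "spanish_post": 120}
    print(simulate_feed(start, pull))
```

With these assumed numbers the script prints `{'english_post': 460, 'spanish_post': 170}`. The post with a small head start keeps the top slot every round, and the gap grows from 10 to 290. This happens even though the second post earns more engagement per impression.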

The evidence platforms prefer not to discuss

In 2020, Instagram publicly acknowledged that its hashtag algorithm was suppressing content from activists and marginalized communities. Although the company framed it as an unintentional moderation error, the impact fell disproportionately on Black and Latina creators.

In 2021, leaked internal Facebook documents revealed that the company knew its moderation systems worked unevenly across languages. For Spanish-language content, that meant more false positives: posts wrongly flagged or suppressed.

TikTok faced similar accusations in 2020, when internal documents suggested that early moderation policies limited the visibility of some users based on physical traits, including weight and race.

This doesn’t prove a coordinated conspiracy. It reveals something more complex—and harder to fix: automated systems replicate and amplify the biases present in the data they’re built on.

And that data reflects the world as it is—not as it should be.

How invisibility actually works

Algorithmic suppression is rarely absolute. It’s not that your content isn’t shown at all—it’s shown less than it should be, amplified less beyond your immediate audience, and receives less system support to reach new people.

This shows up in real ways:

Shadow banning

Content stops appearing in search or explore pages without notice. Your account looks normal—but reach drops dramatically. Platforms rarely acknowledge it officially.

Uneven monetization

Topics especially relevant to Latina communities, such as migration, identity, and activism, often earn less than other content. That discourages creators from covering them.

Unequal distribution

Two similar pieces of content, one from an Anglo creator and one from a Latina creator, can receive very different reach. This can happen even when factors like format, posting time, and follower count are comparable.

What you can control: strategies that work

Knowing the system has bias doesn’t mean you’re powerless. It means you need to be strategic.

Diversify your platforms intentionally

The biggest mistake is building your entire audience on one platform. Algorithms change. Accounts get penalized.

Use social media for discovery—but move your audience to spaces you control:

Email lists

Private groups

Your own website

Email, especially, is your most valuable asset. No social media algorithm decides which of your subscribers see your message.

Understand each platform’s algorithm

Each one prioritizes different signals:

Instagram (2026): favors Reels, watch time, and comments over likes

TikTok: prioritizes completion rate and early engagement

YouTube: values total watch time and click-through rate

Create content aligned with these signals without losing your essence.

Build active community, not passive audience

Algorithms reward conversation.

100 meaningful comments can be worth more than 1,000 likes.

Ask real questions. Invite opinions. Create content that sparks dialogue.

Use the first minutes strategically

Early engagement matters.

Post when your audience is active

Respond quickly to comments

Let your audience know when you publish

This boosts initial signals and increases reach.

Collaborate with other Latina creators

Collaboration multiplies reach.

When you connect audiences, algorithms can recognize the relationship and show your content to more people. This kind of growth doesn’t depend on platform favor; it’s built on real community.

What platforms should do—but still don’t

There are ongoing conversations about making algorithms more equitable and transparent.

Some platforms have:

Announced diversity initiatives

Created funds for underrepresented creators

Promised audits of moderation systems

These steps are still not enough.

Real change has come from pressure—creators documenting suppression, participating in feedback programs, supporting transparency laws, and organizing collectively.

The algorithm does not define your value

Let’s be very clear about this.

The reach your content gets is not a measure of its value.

It’s not a measure of your quality.

It’s not a measure of how important your voice is.

It’s a measure of how a system—with specific goals and biases—responds to what you create.

That says something about the system.

It says nothing about you.

Latina creators who are building real communities, generating meaningful conversations, and creating content their audience truly needs—even when the algorithm doesn’t amplify it—are doing something no algorithm can take away:

They are becoming irreplaceable to the people who follow them.

That may not show up in reach metrics.

But it’s exactly what lasts.